GenAI Everywhere: The Future of Edge AI Optimization with the New NetsPresso®

NP Product Team, Nota AI

The role of Edge AI is rapidly expanding.

Offline voice assistants now carry on conversations in our daily lives, vehicles infer routes in real time, and smartphones generate images without a network connection. Capabilities that once depended entirely on servers are steadily moving onto devices.

This shift also means model optimization should be changed fundamentally. Edge devices operate under strict constraints on compute and power. Running complex generative models efficiently in such environments is difficult to achieve with traditional optimization approaches.

NetsPresso®, Nota AI’s AI optimization platform, is evolving to address this change head-on. In this post, we’ll share the challenges we see emerging and the direction we’re taking.

Edge AI: A Domain NetsPresso® Has Long Focused On

The growing adoption of Edge AI is driven by clear user value.

First, lower latency. Running inference directly on-device eliminates round trips to servers, significantly reducing response time. In domains where real-time performance is critical—such as autonomous vehicles, traffic safety systems, robotics, and industrial equipment—even differences of tens of milliseconds must be carefully managed.

Second, increasing demand for privacy protection. Processing voice, text, and visual data locally allows systems to maintain stronger privacy guarantees.

Finally, reliability is essential in mission-critical systems such as autonomous vehicles and industrial robots. Reducing dependence on network connectivity directly improves system robustness. Ensuring stable operation even in unreliable network environments is becoming increasingly important.

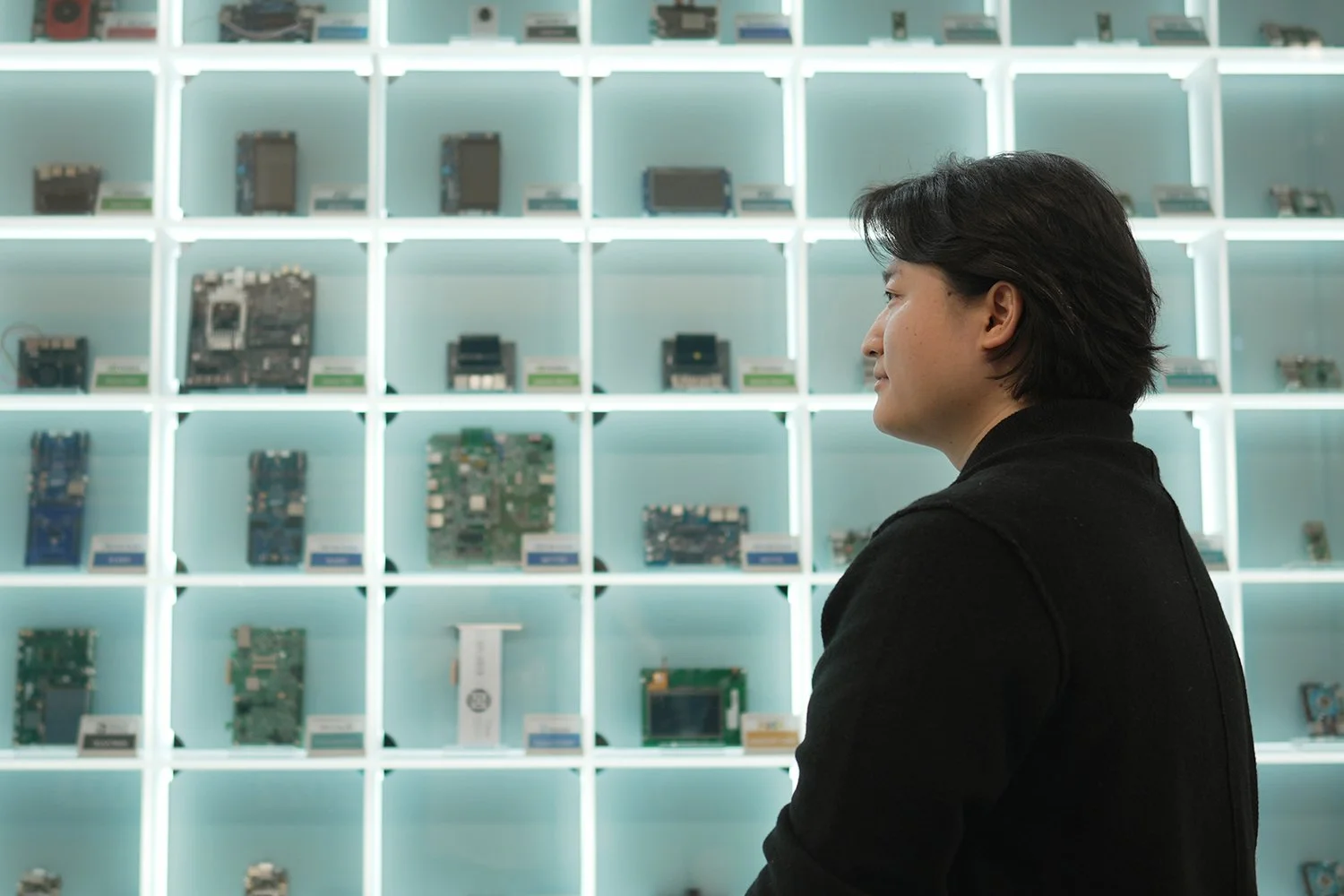

Figure 1. Device Farm at Nota AI Seoul office, featuring devices where AI models have been successfully ported by Nota AI.

NetsPresso® has focused on this space from the beginning. Our goal has been to compress deep learning models for resource-constrained devices while optimizing them to run reliably in real-world industrial environments.

Using techniques such as ONNX-based graph optimization, quantization, pruning, and filter decomposition, NetsPresso® reduces model size and computational cost while maximizing performance under target hardware constraints. Importantly, these optimizations have not remained research prototypes—they have been validated in real production deployments.

However, with the arrival of the GenAI era, the landscape has changed. Traditional approaches alone are no longer sufficient to address the requirements of emerging models and hardware platforms.

Challenges of Edge Optimization in the GenAI Era

1. Transformers Resist Universal Optimization

Historically, many models were based on CNNs (Convolutional Neural Networks). Their computation patterns were relatively regular, and input sizes were often fixed. As a result, optimization strategies such as detecting and merging specific layer patterns worked effectively.

Transformer-based generative models are different.

The computational cost and memory access patterns of attention scale directly with the input sequence length. In autoregressive inference, the KV cache grows with every generated token, causing the effective tensor shapes to change during execution. Optimization must be handled with dynamic shapes in mind.

Architectural diversity further complicates the situation. Transformer models come in many forms—encoder-only, decoder-only, and mixture-of-experts (MoE) architectures, among others—and the scale of computation varies widely depending on hidden dimensions and attention head configurations.

Within the attention mechanism itself, components such as softmax dependencies, residual connections, and causal masking introduce strong data and temporal dependencies between operations.

As a result, optimization strategies increasingly require model-specific tuning, and aggressive techniques such as operator reordering or fusion become difficult to generalize across different transformer architectures.

2. Fragmentation of Model Frameworks and Runtimes

New models implemented in PyTorch are emerging rapidly. At the same time, different hardware platforms support different frameworks, and model conversion during optimization often alters operator compositions.

Different devices support different model frameworks, and some hardware vendors provide proprietary runtime stacks, further fragmenting the execution layer.

When both model architectures and execution environments are diversifying simultaneously, designing separate optimization rules for every framework–runtime combination becomes unsustainable.

What’s needed instead is an abstraction layer capable of accommodating model-level structural flexibility while consistently supporting diverse execution environments.

3. Heterogeneous Hardware

The era when optimization only needed to consider CPUs and GPUs is over.

Today’s systems incorporate a wide range of accelerators—including NPUs, DSPs, and TPUs—each with distinct characteristics in terms of memory architecture, computational parallelism, and supported operator sets.

Even for the same model, the optimal optimization strategy can vary dramatically depending on the target hardware.

The requirement is clear:

A platform capable of optimizing

diverse models, across diverse hardware, with flexibility and consistency.

This is the direction NetsPresso® is pursuing.

The Approach Behind NetsPresso®

1. NPIR: Flexible Optimization Through an Intermediate Representation

To bridge this gap, NetsPresso® introduces its own intermediate representation: NPIR (NetsPresso Intermediate Representation).

NPIR sits between model frameworks and hardware backends through a set of conversion adapters, allowing NetsPresso® to accumulate and apply advanced optimization techniques in a unified representation.

The core design goals of NPIR are framework compatibility and hardware extensibility.

To maintain strong alignment with PyTorch, NPIR adopts Aten operators as its fundamental computation units. This preserves the flexibility of model structures while minimizing unnecessary graph distortions that often occur during optimization pipelines.

NPIR can naturally represent complex transformer architectures—including those with dynamic shapes—and can be easily extended to multiple IR formats and hardware runtimes.

Importantly, NPIR is more than a representation layer. It provides the foundation for consistently understanding and analyzing models across frameworks, and for restructuring them according to target device constraints.

This is the technical foundation behind what we call “GenAI Everywhere.”

2. Cross UI: Combining CLI and GUI

Model optimization is inherently iterative.

Engineers repeatedly adjust quantization precision, replace operations, and compare results—sometimes running dozens of experimental cycles. The key lies in interpreting results and designing the next experiment.

To support this workflow, NetsPresso® provides Cross UI, combining both CLI and GUI interfaces.

The CLI is optimized for automation and large-scale experimentation. Engineers can define multiple configurations through scripts and integrate them directly into engineering workflows.

The GUI, in contrast, focuses on analysis. Developers can visually inspect per-operation latency, SNR, and bottleneck regions, enabling a structural understanding of optimization effects.

NetsPresso® provides hardware-aware optimization recipes by default to lower the barrier to entry, while advanced users can directly tune detailed parameters. All experimental records are accumulated in a centralized dashboard, ensuring reproducibility and scalability.

As a result, engineers spend less time on repetitive experimentation and more time focusing on higher-level design decisions.

Meet the New NetsPresso®

More details about the redesigned NetsPresso® will be shared in upcoming technical blog posts.

A demo of the new NetsPresso® will be available starting in April. You can pre-register for the demo through the banner and link below. During a meeting with our team, we can also discuss how NetsPresso® integrates with your organization’s target hardware environment and runtime stack.

The updated NetsPresso®, built on NPIR, introduces more advanced graph optimization and quantization capabilities. At each stage of optimization, NetsPresso® measures both quality metrics such as SNR and benchmark performance on target hardware, enabling more quantitative decision-making during recipe design and experimentation.

Collected performance and quality data are integrated with a graph visualization module, allowing engineers to analyze the optimization process structurally. This makes it easy to identify which layers are bottlenecks, determine whether a particular optimization technique actually improves latency, and explore additional optimization options tailored to the target hardware and runtime environment.

Rather than treating optimization as a collection of isolated techniques, NetsPresso® frames it as a systematic process of experimentation, measurement, analysis, and iteration.

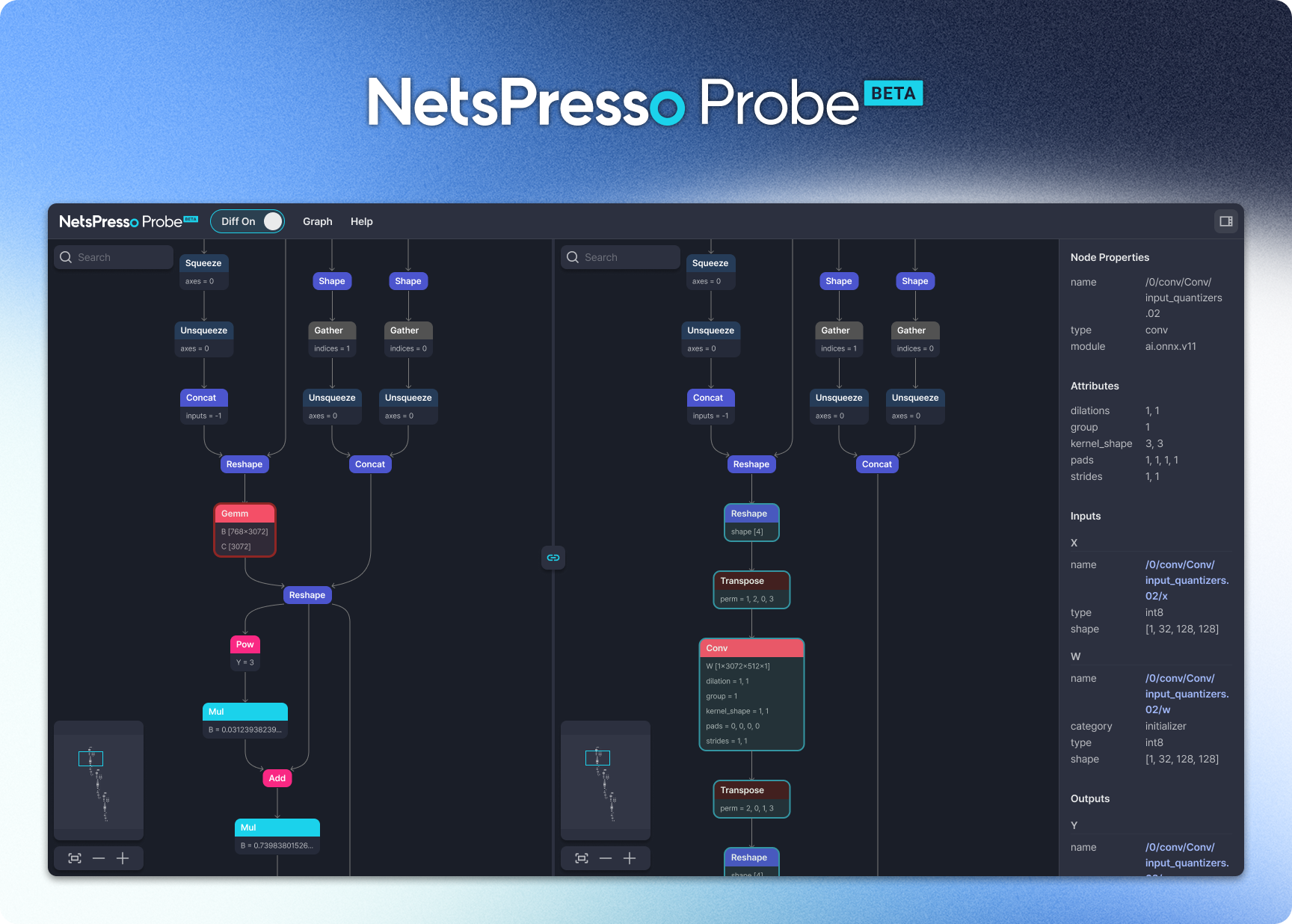

Sneak Peek: NetsPresso Probe

Ahead of the NetsPresso® update, Nota AI has released NetsPresso Probe.

Probe is a specialized tool for visualizing model graphs and comparing structural differences before and after optimization. Understanding the architecture of Transformer-based models is often challenging. To help developers better interpret their models, Nota AI has made this graph visualization capability freely available. Because understanding the model structure is the starting point for effective optimization.

Closing Thoughts

The expansion of GenAI to the edge is already underway.

The key question is no longer whether it will happen, but which models will run on which devices—and how efficiently they can do so.

With dynamic model architectures, fragmented frameworks, and increasingly heterogeneous hardware environments, edge optimization is becoming more complex than ever.

Through NPIR and Cross UI, NetsPresso® aims to address this complexity in a structured way.

Our goal is clear:

to make it possible to run diverse GenAI models on every device—and to make the optimization process far more productive.

GenAI Everywhere.

And we’re excited to begin this journey with you.

If you have any further inquiries about this research, please feel free to reach out to us at following email address: 📧 contact@nota.ai.

Furthermore, if you have an interest in AI optimization technologies, you can visit our website at 🔗 netspresso.ai.